6.6 Complex research designs

In Chapter 5 and in this chapter so far, we have restricted our discussion to the simplest possible research designs – cases where we are dealing with two variables with two values each. To conclude our discussion of statistical hypothesis testing, we will look at two cases of more complex designs – one with two variables that each have more than two values, and one with more than two variables.

6.6.1 Variables with more than two values

In our case studies involving the English possessive constructions, the dependent variable (TYPE OF) POSSESSIVE was treated as binary; we assumed that it had two values, S-POSSESSIVE and OF-POSSESSIVE. The independent variables were more complex: the cardinal variable LENGTH obviously has a potentially infinite number of values and the ordinal variable ANIMACY was treated as having ten values in our annotation scheme. The nominal variable DISCOURSE STATUS was treated like a binary variable (although potentially it has an infinite number of values, too).

Frequently, perhaps even typically, corpus linguistic research questions will be more complex, and we will be confronted with designs where both the dependent and the independent variable will have (or be treated as having) more than two values. Since we are most likely to deal with nominal variables in corpus linguistics, we will discuss in detail an example where both variables are nominal.

In the preceding chapters we treated as S-POSSESSIVE both constructions where the modifier is a possessive pronoun and constructions where the modifier is a proper name or a noun with a possessive clitic. Given that the proportion of pronouns and nouns in general varies across language varieties (Biber et al. 1999), we might be interested in seeing whether the same is true for these three variants of the s-possessive. Our dependent variable MODIFIER OF S-POSSESSIVE would then have three values. The independent variable VARIETY, being heavily dependent on the model of language varieties we adopt (or rather, on the nature of the text categories included in our corpus), has an indefinite number of values. To keep things simple, let us distinguish just four broad text categories recognized in the British National Corpus (and many other corpora): SPOKEN, FICTION, NEWSPAPER, and ACADEMIC. This gives us a four-by-three design.

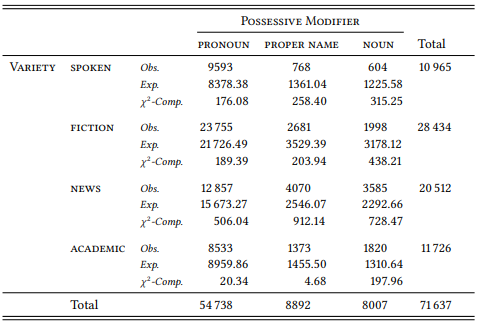

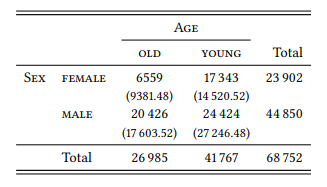

Searching the BNC Baby for words tagged as possessive pronouns and for words tagged unambiguously as proper names or common nouns yields the observed frequencies shown in the first line of each row in Table 6.14.

Table 6.14: Types of modifiers in the s-possessive in different text categories

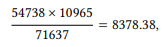

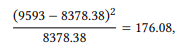

The expected frequencies and the χ2 components are arrived at in the same way as for the two-by-two tables in the preceding chapter. First, for each cell, the sum of the row in which the cell is located is multiplied by the sum of the column in which it is located, and the result is divided by the table sum:

$E_{ij} = \dfrac{\text{row sum}_i \times \text{column sum}_j}{\text{table sum}}$

The expected frequencies are shown in the second line of each cell. Next, for each cell, we calculate the χ2 component $(O - E)^2/E$. For example, for the intersection PRONOUN ∩ SPOKEN, with an observed frequency of 9593 and an expected frequency of 8378.38, we get

$\dfrac{(9593 - 8378.38)^2}{8378.38} = 176.08$

The corresponding values are shown in the third line of each cell. Adding up the individual χ2 components gives us a χ2 value of 3950.89.
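The two steps just described (expected frequencies, then χ2 components) can be sketched in Python as follows. This is a minimal illustration, not the author's procedure: the function names are our own, and the 4-by-3 counts are made up, since the full figures of Table 6.14 are not listed in the text. Only the single-cell check uses the figures given for PRONOUN ∩ SPOKEN (9593 observed, 8378.38 expected).

```python
def expected_frequencies(table):
    """Expected frequency of every cell under independence:
    (row sum * column sum) / table sum."""
    row_sums = [sum(row) for row in table]
    col_sums = [sum(col) for col in zip(*table)]
    total = sum(row_sums)
    return [[r * c / total for c in col_sums] for r in row_sums]


def chi2_components(table):
    """The (O - E)^2 / E component of every cell."""
    expected = expected_frequencies(table)
    return [
        [(o - e) ** 2 / e for o, e in zip(obs_row, exp_row)]
        for obs_row, exp_row in zip(table, expected)
    ]


def chi2_statistic(table):
    """Overall chi-square value: the sum of all cell components."""
    return sum(sum(row) for row in chi2_components(table))


# A single component, using the PRONOUN ∩ SPOKEN figures given in the text
component = (9593 - 8378.38) ** 2 / 8378.38
print(round(component, 2))  # 176.08

# Hypothetical 4-by-3 counts (rows: SPOKEN, FICTION, NEWSPAPER, ACADEMIC;
# columns: PRONOUN, PROPER NAME, COMMON NOUN), NOT the BNC Baby data
toy = [
    [50, 10, 15],
    [40, 12, 18],
    [30, 20, 25],
    [20, 15, 35],
]
print(round(chi2_statistic(toy), 2))
```

Note that the expected frequencies always add up to the table sum, which is a quick sanity check when computing them by hand.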

Using the formula given in (5) above, Table 6.14 has (4−1) × (3−1) = 6 degrees of freedom. As the χ2 table in Section 14.1 shows, the critical value for a significance level of 0.001 at 6 degrees of freedom is 22.46; the χ2 value for Table 6.14 is much higher than this, so our results are highly significant. We could summarize our findings as follows: “The frequency of pronouns, proper names and nouns as modifiers of the s-possessive differs highly significantly across text categories (χ2 = 3950.89, df = 6, p < 0.001)”.
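Instead of looking up critical values in a printed table, the upper-tail probability of the χ2 distribution can also be computed directly. The sketch below uses only the standard library and assumes integer degrees of freedom, relying on the standard closed forms for even and odd df; `degrees_of_freedom` and `chi2_sf` are hypothetical helper names, and in practice one would more likely call a statistics package.

```python
import math


def degrees_of_freedom(n_rows, n_cols):
    """df of a two-dimensional contingency table: (rows - 1) * (columns - 1)."""
    return (n_rows - 1) * (n_cols - 1)


def chi2_sf(x, df):
    """Upper-tail probability P(X >= x) of a chi-square distribution
    with integer df, using closed forms for even and odd df."""
    if x <= 0:
        return 1.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum over i < df/2 of (x/2)^i / i!
        term, total = 1.0, 1.0
        for i in range(1, df // 2):
            term *= (x / 2) / i
            total += term
        return math.exp(-x / 2) * total
    # Odd df: erfc(sqrt(x/2)) plus a finite correction for df >= 3
    root = math.sqrt(x)
    density = math.exp(-x / 2) / math.sqrt(2 * math.pi)  # normal pdf at sqrt(x)
    total, term = 0.0, root
    for i in range(1, (df - 1) // 2 + 1):
        if i > 1:
            term *= x / (2 * i - 1)
        total += term
    return math.erfc(root / math.sqrt(2)) + 2 * density * total


df = degrees_of_freedom(4, 3)          # Table 6.14: four categories, three modifier types
print(df)                              # 6
print(chi2_sf(22.46, df))              # close to 0.001: 22.46 is the critical value
print(chi2_sf(3950.89, df) < 0.001)    # True
```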

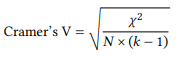

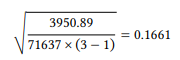

Recall that the mere fact of a significant association does not tell us anything about the strength of that association; we need a measure of effect size. In the preceding chapter, ϕ was introduced as an effect size for two-by-two tables (see (7)). For larger tables, there is a generalized version of ϕ, referred to as Cramér’s V (or, occasionally, as Cramér’s ϕ or ϕ′), which is calculated as follows (N is the table sum, k is the number of rows or columns, whichever is smaller):

(20) $V = \sqrt{\dfrac{\chi^2}{N \times (k - 1)}}$

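For our table, which has four rows and three columns, k = 3, so this gives us $V = \sqrt{\dfrac{3950.89}{N \times (3 - 1)}}$, with N the table sum of Table 6.14.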

Recall that the square of a correlation coefficient tells us the proportion of the variance captured by our design, which, in this case, is V² = 0.0275. In other words, VARIETY explains less than three percent of the variation in s-possessor modifier types; or, as we might put it: “This study has shown a very weak but highly significant influence of language variety on the realization of s-possessor modifiers as pronouns, proper names or common nouns (χ2 = 3950.89, df = 6, p < 0.001, V² = 0.0275).”
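Formula (20) translates directly into code. The following minimal sketch (the function name `cramers_v` is our own) also shows that for a two-by-two table, where k = 2, V is identical to ϕ = √(χ2/N).

```python
import math


def cramers_v(chi2, n, n_rows, n_cols):
    """Cramér's V = sqrt(chi2 / (N * (k - 1))), with k the smaller of the
    number of rows and the number of columns."""
    k = min(n_rows, n_cols)
    return math.sqrt(chi2 / (n * (k - 1)))


# For a two-by-two table k = 2, so V reduces to phi = sqrt(chi2 / N);
# the perfectly associated table [[10, 0], [0, 10]] has chi2 = 20 and N = 20
print(cramers_v(20, 20, 2, 2))      # 1.0

# V implied by the proportion of variance V^2 = 0.0275 reported in the text
print(round(math.sqrt(0.0275), 3))  # 0.166
```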

Despite the weakness of the effect, this result confirms our expectation that general preferences for pronominal vs. nominal reference across language varieties are also reflected in preferences for types of modifiers in the s-possessive. However, with the increased size of the contingency table, it becomes more difficult to determine exactly where the effect is coming from. More precisely, it is no longer obvious at a glance which of the intersections of our two variables contribute to the overall significance of the result in what way and to what extent.

To determine in what way a particular intersection contributes to the overall result, we need to compare the observed and expected frequencies in each cell. For example, there are 9593 cases of s-possessives with pronominal modifiers in spoken language, where 8378.38 are expected, showing that pronominal modifiers are more frequent in spoken language than expected by chance. In contrast, there are 8533 such modifiers in academic language, where 8959.86 are expected, showing that they are less frequent in academic language than expected by chance. This comparison is no different from the one we make for two-by-two tables, but with increasing degrees of freedom, the pattern becomes less predictable. It would be useful to visualize the relation between observed and expected frequencies for the entire table in a way that would allow us to take them in at a glance.

To determine to what extent a particular intersection contributes to the overall result, we need to look at the size of the χ2 components: the larger the component, the greater its contribution to the overall χ2 value. In fact, we can do more than simply compare the χ2 components to each other; we can determine for each component whether it is, in itself, statistically significant. In order to do so, we first imagine that the large contingency table (in our case, the 4-by-3 table) consists of a series of tables with a single cell each, each containing the result for a single intersection of our variables.

We now treat the χ2 component as a χ2 value in its own right, checking it for statistical significance in the same way as the overall χ2 value. In order to do so, we first need to determine the degrees of freedom for our one-cell tables – obviously, this can only be 1. Checking the table of critical χ2 values in Section 14.1, we find, for example, that the χ2 component for the intersection PRONOUN ∩ SPOKEN, which is 176.08, is higher than the critical value 10.83, suggesting that this intersection’s contribution is significant at p < 0.001.

However, matters are slightly more complex: by looking at each intersection separately, we are essentially treating each cell as an independent result; in our case, it is as if we had performed twelve tests instead of just one. Now, recall that levels of significance are based on probabilities of error: for example, p = 0.05 means, roughly, that there is a five percent likelihood that a result is due to chance. Obviously, the more tests we perform, the more likely it becomes that one of the results will, indeed, be due to chance. For example, if we had performed twenty tests on data without any real association, we would expect one of them to yield a significant result at the 5-percent level, because 20 × 0.05 = 1.00.
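The intuition about multiple tests can be made concrete. Note that 20 × 0.05 = 1 is the expected number of false positives; the probability of getting at least one false positive among m tests is 1 − (1 − α)^m. The sketch below computes this under the simplifying assumption that the tests are independent (the cells of a single contingency table are not strictly independent, so the figures are only indicative); `familywise_error` is a hypothetical name.

```python
def familywise_error(alpha, m):
    """Probability of at least one false positive among m tests at level
    alpha when no real effect exists, ASSUMING the tests are independent."""
    return 1 - (1 - alpha) ** m


print(round(familywise_error(0.05, 1), 2))   # 0.05: a single test
print(round(familywise_error(0.05, 12), 2))  # 0.46: twelve cells, as in Table 6.14
print(round(familywise_error(0.05, 20), 2))  # 0.64: twenty tests
```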

To avoid this situation, we have to correct the levels of significance when performing multiple tests on the same set of data. The simplest way of doing so is the so-called Bonferroni correction, which consists in dividing the conventionally agreed-upon significance levels by the number of tests we are performing. In the case of Table 6.14, this means dividing them by twelve, giving us significance levels of 0.05/12 = 0.004167 (significant), 0.01/12 = 0.000833 (very significant), and 0.001/12 = 0.000083 (highly significant).6 Our table of critical values in Section 14.1 does not give the critical χ2 values for these levels, but the value for the intersection PRONOUN ∩ SPOKEN, 176.08, is larger than the value required for the next smaller level (0.00001, with a critical value of 24.28), so we can be certain that the contribution of this intersection is, indeed, highly significant. Again, it would be useful to summarize the degrees of significance in such a way that they can be assessed at a glance.
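The Bonferroni-corrected levels and the check for a single cell can be sketched as follows. For one degree of freedom, the upper-tail probability of χ2 equals erfc(√(χ2/2)), which Python's standard library provides as `math.erfc`; the helper names are our own.

```python
import math

CONVENTIONAL_LEVELS = {"*": 0.05, "**": 0.01, "***": 0.001}


def bonferroni_levels(m, levels=CONVENTIONAL_LEVELS):
    """Divide each conventional significance level by the number of tests m."""
    return {stars: alpha / m for stars, alpha in levels.items()}


def cell_p_value(component):
    """p-value of one chi-square component tested with df = 1:
    P(chi2 with 1 df >= component) = erfc(sqrt(component / 2))."""
    return math.erfc(math.sqrt(component / 2))


corrected = bonferroni_levels(12)  # twelve one-cell tests in Table 6.14
print(corrected)                   # roughly 0.004167, 0.000833 and 0.000083
p = cell_p_value(176.08)           # PRONOUN ∩ SPOKEN
print(p < corrected["***"])        # True
```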

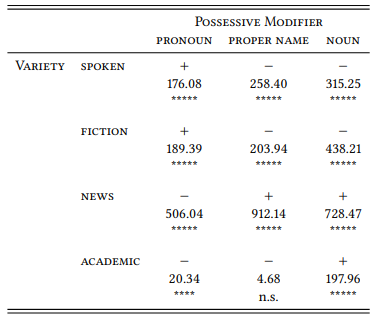

There is no standard way of representing the way in which, and the degree to which, each cell of a complex table contributes to the overall result, but the representation in Table 6.15 seems reasonable: in each cell, the first line contains either a plus (for “more frequent than expected”) or a minus (for “less frequent than expected”); the second line contains the χ2 component; and the third line contains the (corrected) level of significance, using the standard convention of representing levels of significance by asterisks (one asterisk per level).

This table presents the complex results at a single glance; they can now be interpreted. Some patterns now become obvious: for example, spoken language and fiction are most similar to each other. They both favor pronominal modifiers, while proper names and common nouns are disfavored, and the χ2 components for these preferences are very similar. Also, if we posit a kind of gradient of referent familiarity from pronouns over proper names to nouns, we can place spoken language and fiction at one end, academic language at the other, and newspaper language somewhere in the middle.
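The annotation scheme of Table 6.15 (direction of deviation, χ2 component, corrected level of significance) can be generated automatically. The following is a minimal sketch, assuming Bonferroni correction by the number of cells and one degree of freedom per cell as described above; `annotate_cells` is a hypothetical name and the counts in the usage example are invented.

```python
import math


def annotate_cells(table, levels=(0.05, 0.01, 0.001)):
    """Return (sign, chi2 component, stars) for every cell, in the style of
    Table 6.15; stars use Bonferroni-corrected levels (level / number of cells)."""
    row_sums = [sum(row) for row in table]
    col_sums = [sum(col) for col in zip(*table)]
    n = sum(row_sums)
    m = len(row_sums) * len(col_sums)  # number of one-cell tests
    annotated = []
    for i, row in enumerate(table):
        annotated_row = []
        for j, observed in enumerate(row):
            expected = row_sums[i] * col_sums[j] / n
            component = (observed - expected) ** 2 / expected
            p = math.erfc(math.sqrt(component / 2))  # df = 1 for a one-cell table
            # one asterisk for every corrected level the cell reaches
            stars = "".join("*" for level in levels if p < level / m)
            sign = "+" if observed > expected else "-"
            annotated_row.append((sign, round(component, 2), stars or "n.s."))
        annotated.append(annotated_row)
    return annotated


toy = [  # hypothetical 4-by-3 counts, NOT the BNC Baby data
    [500, 100, 150],
    [400, 120, 180],
    [300, 200, 250],
    [200, 150, 350],
]
for row in annotate_cells(toy):
    print(row)
```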

6.6.2 Designs with more than two variables

Note that from a certain perspective the design in Table 6.15 is flawed: the variable VARIETY actually conflates at least two theoretically independent variables, namely MEDIUM (with the values SPOKEN and WRITTEN) and DISCOURSE DOMAIN (in our design with the values NEWS (RECOUNTING OF ACTUAL EVENTS), FICTION (RECOUNTING OF IMAGINARY EVENTS) and ACADEMIC (RECOUNTING OF SCIENTIFIC IDEAS, PROCEDURES, AND RESULTS)). These two variables are independent in that both written and spoken language are found in each of these discourse domains. They are conflated in our variable VARIETY in that one of the four values is spoken and the other three are written language, and in that the text category SPOKEN is not differentiated by topic. There may be reasons to ignore this conflation a priori, as we have done; for example, our model may explicitly assume that differentiation by topic happens only in the written domain. But even then, it would be useful to treat MEDIUM and DISCOURSE DOMAIN as independent variables, just in case our model is wrong in assuming this.

Table 6.15: Types of modifiers in the s -possessive in different text categories: Contributions of the individual intersections

In contrast to all examples of research designs we have discussed so far, which involved just two variables and were thus bivariate, this design would be multivariate: there is more than one independent variable whose influence on the dependent variable we wish to assess. Such multivariate research designs are often useful (or even necessary) even in cases where the variables in our design are not conflations of more basic variables.

In the study of language use, we will often – perhaps even typically – be confronted with a fragment of reality that is too complex to model in terms of just two variables.

In some cases, this may be obvious from the outset: we may suspect from previous research that a particular linguistic phenomenon depends on a range of factors, as in the case of the choice between the s- and the of-possessive, which, as we saw in the preceding chapters, has long been hypothesized to be influenced by the animacy, the length and/or the givenness of the modifier.

In other cases, the multivariate nature of the phenomenon under investigation may emerge in the course of pursuing an initially bivariate design. For example, we may find that the independent variable under investigation has a statistically significant influence on our dependent variable, but that the effect size is very small, suggesting that the distribution of the phenomenon in our sample is conditioned by more than one influencing factor.

Even if we are pursuing a well-motivated bivariate research design and find a significant influence with a strong effect size, it may be useful to take additional potential influencing factors into account: since corpus data are typically unbalanced, there may be hidden correlations between the variable under investigation and other variables that distort the distribution of the phenomenon in a way that suggests a significant influence where no such influence actually exists.

The next subsection will use the latter case to demonstrate the potential shortcomings of bivariate designs and the subsection following it will present a solution. Note that this solution is considerably more complex than the statistical procedures we have looked at so far and while it will be presented in sufficient detail to enable the reader in principle to apply it themselves, some additional reading will be highly advisable.

6.6.2.1 A danger of bivariate designs

In recent years, attention has turned to sociolinguistic factors potentially influencing the choice between the s-possessive and the of-construction. It has long been known that the level of formality has an influence (Jucker 1993, also Grafmiller 2014), but recently, more traditional sociolinguistic variables like SEX and AGE have been investigated. The results suggest that the latter has an influence, while the former does not: for example, Jankowski & Tagliamonte (2014) find no influence of sex, but find that age has an influence under some conditions, with young speakers using the s-genitive more frequently than old speakers for organizations and places; Shih et al. (2015) find a similar, more general influence of age.

Let us take a look at the influence of SEX and AGE on the choice between the two possessives in the spoken part of the BNC. Since it is known that women tend to use pronouns more than men do (see Case Study 10.2.3.1 in Chapter 10), let us exclude possessive pronouns and operationalize the S-POSSESSIVE as “all tokens tagged POS in the BNC”, which will capture the possessive clitic ’s and zero possessives (on common nouns ending in alveolar fricatives). Since the spoken part of the BNC is too large for us to identify of-possessives manually, let us operationalize them somewhat crudely as “all uses of the preposition of”; this encompasses not just of-possessives, but also the quantifying and partitive of-constructions that we manually excluded in the preceding chapters, the complementation of adjectives like aware and afraid, verbs like consist and dispose, etc. On the one hand, this makes our case study less precise; on the other hand, any preference for of-constructions may just be a reflex of a general preference for the preposition of, in which case we would be excluding relevant data by focusing on of-possessives alone. Anyway, our main point will be one concerning statistical methodology, so it does not matter too much either way.

So, let us query all tokens tagged as possessives (POS) or the preposition of (PRF) in the spoken part of the BNC, discarding all hits for which the information about speaker sex or speaker age is missing. Let us further exclude the age range 0–14, as it may include children who have not fully acquired the grammar of the language, and the age range 60+ as too unspecific. To keep the design simple, let us recode all age classes between 15 and 44 years of age as YOUNG and the age range 45–59 as OLD (I fall into the latter, just in case someone thinks this category label discriminates against people in their prime). Let us further accept the categorization of speakers into male and female that the makers of the BNC provide.

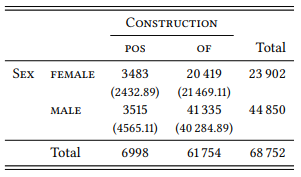

Table 6.16 shows the intersections of CONSTRUCTION and SEX in the results of this query.

Unlike the studies mentioned above, we find a clear influence of SEX on CONSTRUCTION, with female speakers preferring the s-possessive and male speakers preferring the of-construction(s). The difference is highly significant, although the effect size is rather weak (χ2 = 773.55, df = 1, p < 0.001, ϕ = 0.1061).
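For a two-by-two table like Table 6.16, χ2 and ϕ can be computed from the four cell counts with the usual shortcut formula, which is algebraically equivalent to summing the (O − E)²/E components. This sketch assumes no continuity correction, and the counts are invented for illustration; they are not those of Table 6.16. The name `chi2_phi_2x2` is our own.

```python
import math


def chi2_phi_2x2(a, b, c, d):
    """Chi-square (without continuity correction) and phi for the
    two-by-two table [[a, b], [c, d]]; phi = sqrt(chi2 / N)."""
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    return chi2, math.sqrt(chi2 / n)


# Hypothetical counts, NOT the BNC figures:
# rows FEMALE / MALE, columns S-POSSESSIVE / OF-CONSTRUCTION
chi2, phi = chi2_phi_2x2(30, 20, 10, 40)
print(round(chi2, 2), round(phi, 4))  # 16.67 0.4082
```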

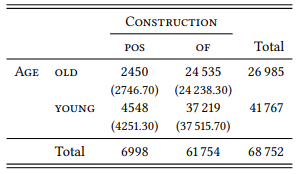

Next, let us look at the intersections of CONSTRUCTION and AGE in the results of our query, which are shown in Table 6.17.

Like previous studies, we find a significant effect of age, with younger speakers preferring the s-possessive and older speakers preferring the of-construction(s). Again, the difference is highly significant, but the effect is extremely weak (χ2 = 58.73, df = 1, p < 0.001, ϕ = 0.02922).

We might now be satisfied that both speaker age and speaker sex have an influence on the choice between the two constructions. However, there is a potential problem that we need to take into account: the values of the variables SEX and AGE and their intersections are not necessarily distributed evenly in the subpart of the BNC used here; although the makers of the corpus were careful to include a broad range of speakers of all ages, sexes (and class memberships, ignored in our study), they did not attempt to balance all these demographic variables, let alone their intersections. So let us look at the intersection of SEX and AGE in the results of our query. These are shown in Table 6.18.

Table 6.16: The influence of SEX on the choice between the two possessives

Table 6.17: The influence of AGE on the choice between the two possessives

There are significantly fewer hits produced by old women and significantly more produced by young women in our sample, and, conversely, significantly fewer hits produced by young men and significantly more produced by old men. This overrepresentation of young women and old men is not limited to our sample, but characterizes the spoken part of the BNC in general, which should intrigue feminists and psychoanalysts; for us, it suffices to know that the asymmetries in our sample are highly significant, with an effect size larger than those in the preceding two tables (χ2 = 2142.72, df = 1, p < 0.001, ϕ = 0.1765).

Table 6.18: SEX and AGE in the BNC

This correlation in the corpus of OLD and MALE on the one hand and YOUNG and FEMALE on the other may well be enough to distort the results such that a linguistic behavior typical for female speakers may be wrongly attributed to young speakers (or vice versa), and, correspondingly, a linguistic behavior typical for male speakers may be wrongly attributed to old speakers (or vice versa). More generally, the danger of bivariate designs is that a variable we have chosen for investigation is correlated with one or more variables ignored in our research design, whose influence thus remains hidden. A very general precaution against this possibility is to make sure that the corpus (or our sample) is balanced with respect to all potentially confounding variables. In reality, this is difficult to achieve and may in fact be undesirable, since we might, for example, want our corpus (or sample) to reflect the real-world correlation of speaker variables.

Therefore, we need a way of including multiple independent variables in our research designs even if we are just interested in a single independent variable, but all the more so if we are interested in the influence of several independent variables. It may be the case, for example, that both SEX and AGE influence the choice between ’s and of, either in that the two effects add up, or in that they interact in more complex ways.

6.6.2.2 Configural frequency analysis

There is a range of multivariate statistical methods that are routinely used in corpus linguistics, such as the ANOVA mentioned at the end of the previous chapter for situations where the dependent variable is measured in terms of cardinal numbers, and various versions of logistic regression for situations where the dependent variable is ordinal or nominal.

In this book, I will introduce multivariate designs using Configural Frequency Analysis (CFA), a straightforward extension of the χ2 test to designs with more than two nominal variables. This method has been used in psychology and psychiatry since the 1970s, and while it has never become very widespread, it has, in my opinion, a number of didactic advantages over other methods when it comes to understanding multivariate research designs. Most importantly, it is conceptually very simple (if you understand the χ2 test, you should be able to understand CFA), and the results are very transparent (they are presented as observed and expected frequencies of intersections of variables).

This does not mean that CFA is useful only as a didactic tool; it has been applied fruitfully to linguistic research issues, for example, in the study of language disorders (Lautsch et al. 1988), educational linguistics (Fujioka & Kennedy 1997), psycholinguistics (Hsu et al. 2000) and social psychology (Christmann et al. 2000). An early suggestion to apply it to corpus data is found in Schmilz (1983), but the first actual applications of this kind that I am aware of are Gries (2002; 2004). Since Gries introduced the method to corpus linguistics, it has become a minor but nevertheless well-established corpus-linguistic research tool for a range of linguistic phenomena (see, e.g., Stefanowitsch & Gries 2005; 2008; Liu 2010; Goschler et al. 2013; Hoffmann 2014; Hilpert 2015; and others).

As hinted at above, in its simplest variant, configural frequency analysis is simply a χ2 test on a contingency table with more than two dimensions. There is no logical limit to the number of dimensions, but if we insist on calculating this statistic manually (rather than, more realistically, letting a specialized software package do it for us), then a three-dimensional table is already quite complex to deal with. Thus, we will not go beyond three dimensions here or in the case studies in the second part of this book.

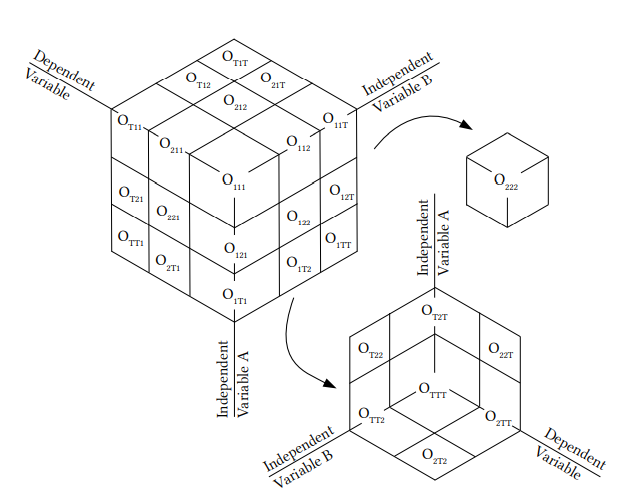

A three-dimensional contingency table would have the form of a cube, as shown in Figure 6.2. The smaller cube represents the cells on the far side of the big cube seen from the same perspective, and the smallest cube represents the cell in the middle of the whole cube. As before, cells are labeled by subscripts: the first subscript stands for the values and totals of the dependent variable, the second for those of the first independent variable, and the third for those of the second independent variable.

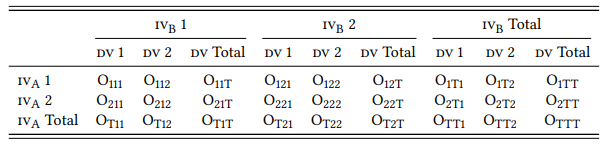

While this kind of visualization is quite useful in grasping the notion of a three-dimensional contingency table, it would be awkward to use it as a basis for recording observed frequencies or calculating the expected frequencies. Thus, a possible two-dimensional representation is shown in Table 6.19. In this table, the first independent variable is shown in the rows, and the second independent variable is shown in the three blocks of three columns (these may be thought of as three “slices” of the cube in Figure 6.2), and the dependent variable is shown in the columns themselves.

Figure 6.2: A three-dimensional contingency table

Table 6.19: A two-dimensional representation of a three-dimensional contingency table

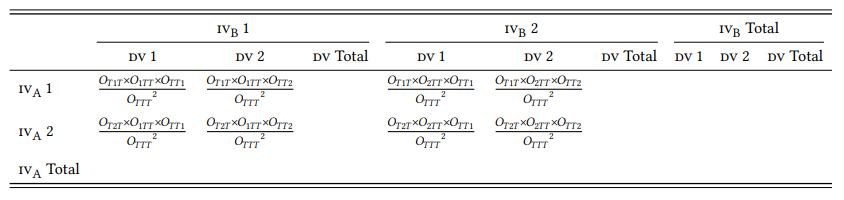

Given this representation, the expected frequencies for each intersection of the three variables can now be calculated in a way that is similar (but not identical) to that used for two-dimensional tables. Table 6.20 shows the formulas for each cell as well as those marginal sums needed for the calculation.

Table 6.20: Calculating expected frequencies in a three-dimensional contingency table

Once we have calculated the expected frequencies, we proceed exactly as before: we derive each cell’s χ2 component by the standard formula $(O - E)^2/E$ and then add up these components to give us an overall χ2 value for the table, which can then be checked for significance. The degrees of freedom of a three-dimensional contingency table are calculated by the following formula (where k is the number of values of each variable and the subscripts refer to the variables themselves):

(21) $df = (k_1 \times k_2 \times k_3) - (k_1 + k_2 + k_3) + 2$

In our case, each variable has two values, thus we get (2 × 2 × 2) − (2 + 2 + 2) + 2 = 4. More interestingly, we can also look at the individual cells to determine whether their contribution to the overall value is significant. In this case, as before, each cell has one degree of freedom and the significance levels have to be adjusted for multiple testing. In CFA, an intersection of variables whose observed frequency is significantly higher than expected is referred to as a type and one whose observed frequency is significantly lower is referred to as an antitype (but if we do not like this terminology, we do not have to use it and can keep talking about “more or less frequent than expected”, as we do with bivariate χ2 tests).
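The whole procedure for a three-dimensional table can be sketched as one short function. This is a minimal first-order CFA resting on an assumption: the expected frequency of each cell is taken to be the product of the three one-way marginal sums divided by N² (complete independence of the three variables). This is consistent with footnote 7, but it is our reconstruction, not a copy of Table 6.20. Each cell is tested with one degree of freedom against a Bonferroni-corrected level (0.001 divided by the number of cells); the name `cfa` and the counts are hypothetical.

```python
import itertools
import math


def cfa(table, alpha=0.001):
    """First-order configural frequency analysis of a three-dimensional table.

    table[i][j][k] is the observed frequency for value i of the dependent
    variable, value j of the first and value k of the second independent
    variable. Expected frequencies ASSUME complete independence:
    E = (n_i.. * n_.j. * n_..k) / N**2.
    """
    dims = (len(table), len(table[0]), len(table[0][0]))
    cells = list(itertools.product(*(range(size) for size in dims)))
    # One-way marginal sums for each of the three variables
    margins = [[0] * size for size in dims]
    for cell in cells:
        observed = table[cell[0]][cell[1]][cell[2]]
        for var, value in enumerate(cell):
            margins[var][value] += observed
    n = sum(margins[0])
    corrected_alpha = alpha / len(cells)  # Bonferroni: one test per cell
    results, chi2 = [], 0.0
    for i, j, k in cells:
        observed = table[i][j][k]
        expected = margins[0][i] * margins[1][j] * margins[2][k] / n ** 2
        component = (observed - expected) ** 2 / expected
        chi2 += component
        p = math.erfc(math.sqrt(component / 2))  # each cell: df = 1
        if p < corrected_alpha:
            label = "type" if observed > expected else "antitype"
        else:
            label = "n.s."
        results.append(((i, j, k), observed, expected, component, label))
    df = math.prod(dims) - sum(dims) + 2  # formula (21)
    return results, chi2, df


# Hypothetical counts, NOT the BNC data: toy[construction][sex][age]
toy = [
    [[300, 120], [150, 260]],
    [[200, 150], [100, 400]],
]
results, chi2, df = cfa(toy)
for cell, observed, expected, component, label in results:
    print(cell, observed, round(expected, 2), round(component, 2), label)
print(round(chi2, 2), df)
```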

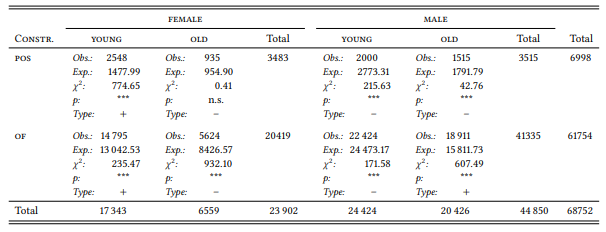

Let us apply this method to the question described in the previous sub-section. Table 6.21 shows the observed and expected frequencies of the two possessive constructions by SPEAKER AGE and SPEAKER SEX, as well as the corresponding χ2 components.7 For expository purposes, the table also shows for each cell the significance level of these components (which is “highly significant” for almost all of them), and the direction of deviation from the expected frequencies (i.e., whether the intersection is a “type”, marked by a plus sign, or an “antitype”, marked by a minus sign).

Table 6.21: Sex, Age and Possessives Multivariate (BNC Spoken)

Adding up the χ2 components yields an overall χ2 value of 2980.10, which, at four degrees of freedom, is highly significant. This tells us something we already expected from the individual pairwise comparisons of the three variables in the preceding section: there is a significant relationship among them. Of course, what we are interested in is what this relationship is, and to answer this question, we need to look at the contributions to χ2.

The result is very interesting. A careful inspection of the individual cells shows that age does not, in fact, have a significant influence. Young women use the s-possessive more frequently than expected and old women use it less (in the latter case, non-significantly), but young women also use the of-construction significantly more frequently than expected and old women use it less. Crucially, young men use the s-possessive less frequently than expected, and old men use it more, but young men also use the of-construction less frequently than expected and old men use it more.

In other words, young women and old men use more of both constructions than young men and old women. A closer look at the contributions to χ2 tells us that SEX, however, does still have an influence on the choice between the two constructions even when AGE is taken into account: for young women, the overuse of the s-possessive is more pronounced than that of the of-construction, while for old women, underuse of the s-possessive is less pronounced than underuse of the of-construction. In other words, taking into account that old women are underrepresented in the corpus compared to young women, there is a clear preference of all women for the s-possessive. Conversely, young men’s underuse of the s-possessive is less pronounced than that of the of-construction, while old men’s overuse of the s-possessive is less pronounced than their overuse of the of-construction. In other words, taking into account that young men are underrepresented in the corpus compared to old men, there is a clear preference of all men for the of-construction.

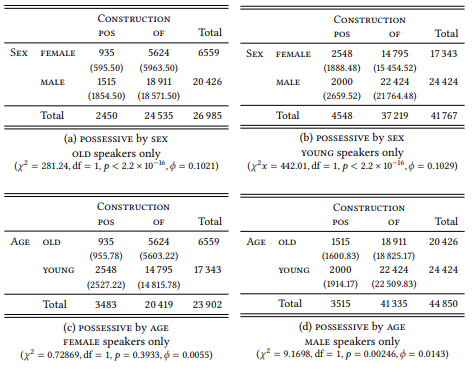

Armed with this new insight from our multivariate analysis, let us return to bivariate analysis, looking at each of the two variables while keeping the other constant. Table 6.22 shows the results of the four bivariate analyses this yields. Since we are performing four tests on the same set of data within the same research question, we have to correct for multiple testing by dividing the usual critical p-values by four, giving us p < 0.0125 for “significant”, p < 0.0025 for “very significant” and p < 0.00025 for “highly significant”. The exact p-values for each table are shown below, as is the effect size.

Table 6.22: Possessives, age and sex (BNC Spoken)

Tables 6.22a and 6.22b show that the effect of SEX on the choice between the two constructions is highly significant both in the group of OLD speakers and in the group of YOUNG speakers, with effect sizes similar to those we found for the bivariate analysis for SPEAKER SEX in Table 6.16 in the preceding section. This effect seems to be genuine, or at least, it is not influenced by the hidden variable AGE (it may be influenced by CLASS or some other variable we have not included in our design).

In contrast, Tables 6.22c and 6.22d show that the effect of AGE that we saw in Table 6.17 in the preceding section disappears completely for women, with a p-value not even significant at uncorrected levels of significance. For men, it is still discernible, but only barely, with a p-value that indicates a very significant relationship at corrected levels of significance, but with an effect size that is close to zero. In other words, it may not be a genuine effect at all, but simply a consequence of the fact that the intersections of AGE and SEX are unevenly distributed in the corpus.
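The logic of Table 6.22 (testing one variable while holding the other constant, with a Bonferroni-corrected level) can be illustrated with made-up data. In the hypothetical counts below, women use the s-possessive 30% of the time and men 20%, in both age groups, but young women and old men are overrepresented. Within each sex, AGE has no effect at all, yet pooling over sex produces a significant AGE effect: exactly the kind of distortion discussed above. The function names are our own, and χ2 is computed without continuity correction.

```python
import math


def chi2_2x2(a, b, c, d):
    """Chi-square (no continuity correction), phi and df = 1 p-value
    for the two-by-two table [[a, b], [c, d]]."""
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    return chi2, math.sqrt(chi2 / n), math.erfc(math.sqrt(chi2 / 2))


def slice_2x2(counts, fixed_pos, fixed_value, compared_pos, compared_values):
    """Build (a, b, c, d) for CONSTRUCTION x one variable, holding the other
    variable constant. Keys of `counts` are (construction, sex, age)."""
    cells = []
    for value in compared_values:
        for construction in ("s", "of"):
            key = [construction, None, None]
            key[fixed_pos] = fixed_value
            key[compared_pos] = value
            cells.append(counts[tuple(key)])
    return tuple(cells)


# Hypothetical counts, NOT the BNC data
counts = {
    ("s", "F", "YOUNG"): 900, ("of", "F", "YOUNG"): 2100,
    ("s", "F", "OLD"): 300, ("of", "F", "OLD"): 700,
    ("s", "M", "YOUNG"): 200, ("of", "M", "YOUNG"): 800,
    ("s", "M", "OLD"): 600, ("of", "M", "OLD"): 2400,
}
SEX, AGE = 1, 2  # positions in the key tuple
tables = {
    "SEX | OLD": slice_2x2(counts, AGE, "OLD", SEX, ("F", "M")),
    "SEX | YOUNG": slice_2x2(counts, AGE, "YOUNG", SEX, ("F", "M")),
    "AGE | FEMALE": slice_2x2(counts, SEX, "F", AGE, ("YOUNG", "OLD")),
    "AGE | MALE": slice_2x2(counts, SEX, "M", AGE, ("YOUNG", "OLD")),
}
corrected_alpha = 0.05 / len(tables)  # 0.0125, as in the text
for label, cells in tables.items():
    chi2, phi, p = chi2_2x2(*cells)
    print(label, round(chi2, 2), round(phi, 4), p < corrected_alpha)

# Pooled over sex, AGE seems to matter (YOUNG: 1100 s vs 2900 of; OLD: 900 vs 3100)
print(round(chi2_2x2(1100, 2900, 900, 3100)[0], 2))  # 26.67
```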

This section is intended to impress on the reader one thing: that looking at one potential variable influencing some phenomenon that we are interested in may not be enough. Multivariate research designs are becoming the norm rather than the exception, and rightly so. Excluding the danger of hidden variables is just one advantage of such designs; in many cases, it is sensible to include several independent variables simply because all of them potentially have an interesting influence on the phenomenon under investigation, or because there is just one particular combination of values of our variables that has an effect. In the second part of this volume, there are several case studies that use CFA and that illustrate these possibilities. One word of warning, however: the ability to include a large number of variables in our research designs should not lead us to do so for the sake of it. We should be able to justify, for each independent variable we include, why we are including it and in what way we expect it to influence our dependent variable.

6 It should be noted that the Bonferroni correction is extremely conservative, but it has the advantage of being very simple to apply (see Shaffer 1995 for an overview of corrections for multiple testing, including many that are less conservative than the Bonferroni correction).

7 Note one important fact about multi-dimensional contingency tables that may be confusing: if we add up the expected frequencies of a given column, their sum will not usually correspond to the sum of the observed frequencies in that column (in contrast to two-dimensional tables, where this is necessarily the case). Instead, the sum of the observed and expected frequencies in each slice is identical.